This guide walks you through setting up GPU infrastructure on Porter, from creating a GPU node group to deploying your first GPU-accelerated application. You deploy GPU-enabled workloads on Porter by creating a fixed node group and selecting a GPU-enabled instance type. The GPU node group has to be a second node group in your cluster, because the default node group is reserved for CPU workloads.


GPUs are only supported on fixed node groups because cost optimization does not currently support GPU instances. This means you select a specific GPU instance type and Porter scales by adding or removing instances of that exact type.

Creating a GPU Node Group

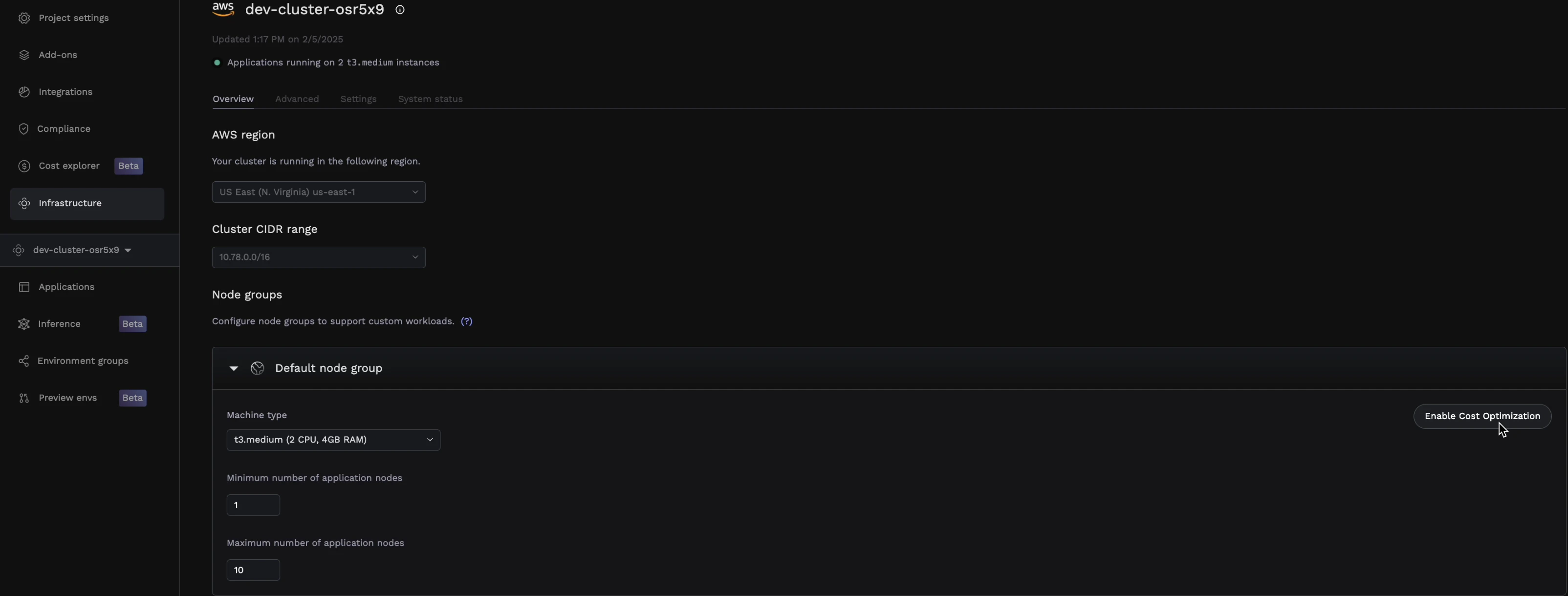

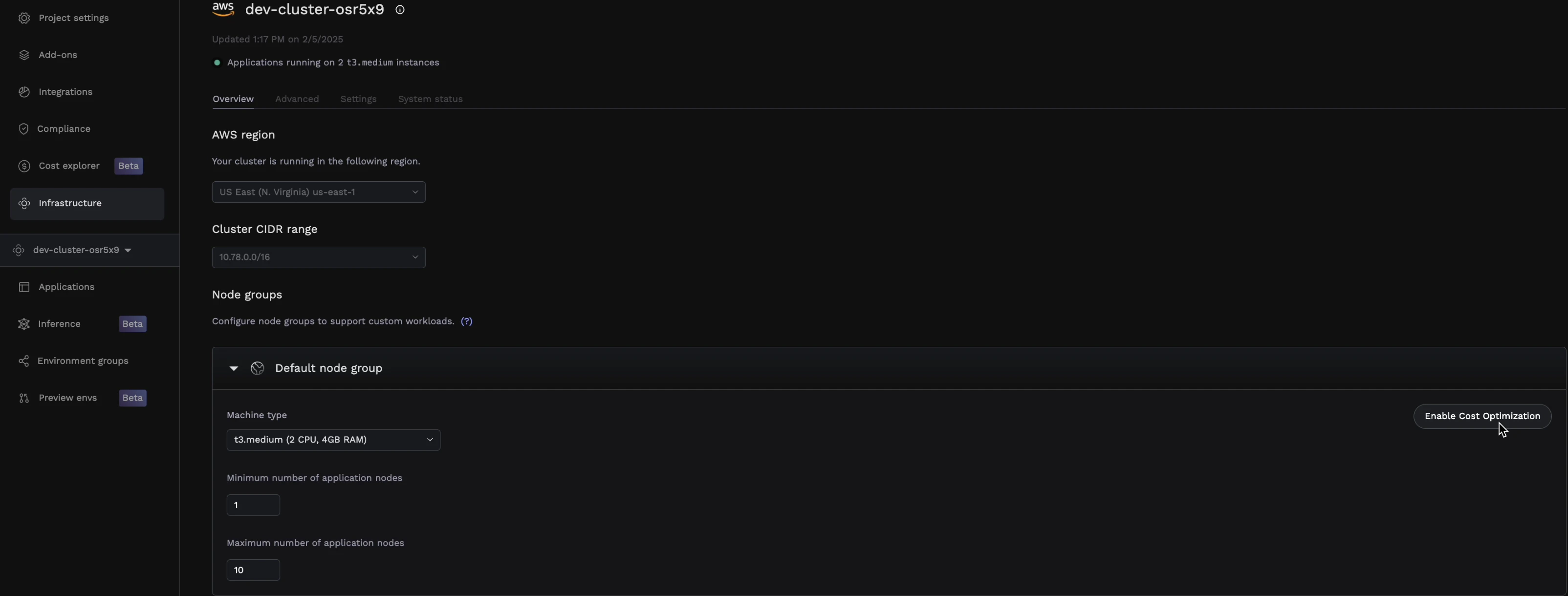

Navigate to Infrastructure

From your Porter dashboard, click on the Infrastructure tab in the left sidebar.

Configure the GPU node group

Configure your GPU node group with the following settings:

| Setting | Description |
|---|---|
| Instance type | Select a GPU-enabled instance type (see Supported GPU instance types below) |
| Minimum nodes | The minimum number of nodes that stay available at all times |
| Maximum nodes | The upper limit for autoscaling based on demand |

Supported GPU instance types

Porter supports a range of NVIDIA GPU instance types on AWS. Choose the instance that matches your workload’s compute, memory, and VRAM requirements.

| Instance type | vCPUs | RAM | GPUs | GPU type | GPU memory |
|---|---|---|---|---|---|
| g4dn.xlarge | 4 | 16 GiB | 1 | NVIDIA T4 | 16 GB |
| p3.2xlarge | 8 | 61 GiB | 1 | NVIDIA V100 | 16 GB |
| p4d.24xlarge | 96 | 1,152 GiB | 8 | NVIDIA A100 | 320 GB |
| p5.4xlarge | 16 | 256 GiB | 1 | NVIDIA H100 | 80 GB |
| p5.48xlarge | 192 | 2 TiB | 8 | NVIDIA H100 | 640 GB |
| p5e.48xlarge | 192 | 2 TiB | 8 | NVIDIA H200 | 1,128 GB |
| p5en.48xlarge | 192 | 2 TiB | 8 | NVIDIA H200 | 1,128 GB |
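As a rough aid for choosing among the instance types in the table above, the following Python sketch encodes the table and filters it by per-GPU memory. The `GpuInstance` class and `candidates` function are hypothetical helpers written for this illustration, not part of Porter. The sketch assumes the GPU memory column is the total across all GPUs on the instance, so per-GPU memory is that total divided by the GPU count.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GpuInstance:
    name: str
    vcpus: int
    gpus: int
    gpu_type: str
    gpu_memory_gb: int  # assumed to be the total across all GPUs on the instance

    @property
    def per_gpu_memory_gb(self) -> float:
        return self.gpu_memory_gb / self.gpus


# Values copied from the supported instance types table.
SUPPORTED = [
    GpuInstance("g4dn.xlarge", 4, 1, "NVIDIA T4", 16),
    GpuInstance("p3.2xlarge", 8, 1, "NVIDIA V100", 16),
    GpuInstance("p4d.24xlarge", 96, 8, "NVIDIA A100", 320),
    GpuInstance("p5.4xlarge", 16, 1, "NVIDIA H100", 80),
    GpuInstance("p5.48xlarge", 192, 8, "NVIDIA H100", 640),
    GpuInstance("p5e.48xlarge", 192, 8, "NVIDIA H200", 1128),
    GpuInstance("p5en.48xlarge", 192, 8, "NVIDIA H200", 1128),
]


def candidates(min_per_gpu_memory_gb: float, min_gpus: int = 1) -> List[GpuInstance]:
    """Return instance types that meet both limits, fewest GPUs first."""
    matches = [
        inst
        for inst in SUPPORTED
        if inst.per_gpu_memory_gb >= min_per_gpu_memory_gb and inst.gpus >= min_gpus
    ]
    # Prefer fewer GPUs, then less memory per GPU, as a crude proxy for cost.
    return sorted(matches, key=lambda inst: (inst.gpus, inst.per_gpu_memory_gb))


if __name__ == "__main__":
    for inst in candidates(min_per_gpu_memory_gb=100):
        print(inst.name, inst.gpu_type)
```

With a 100 GB per-GPU requirement, only p5e.48xlarge and p5en.48xlarge remain. The sort order is a stand-in for cost and ignores pricing and regional availability, which you should check separately.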

The full p5 family (p5.4xlarge, p5.48xlarge, p5e.48xlarge, and p5en.48xlarge) is suited for large-scale training and inference of foundation models. Use p5.4xlarge for single-GPU H100 workloads, and the p5e/p5en variants when you need H200 GPUs with expanded VRAM for larger models. Availability varies by region, so check the AWS console for the latest region support.

Deploying a GPU Application

Once your GPU node group is ready, you can deploy applications that use GPU resources.

Create or select your application

Navigate to your application in the Porter dashboard, or create a new one if you haven’t already.

Select the service

Click on the service that needs GPU access (e.g., your inference worker or training job).

Assign to the GPU node group

Under General, find the Node group selector and choose your GPU node group from the dropdown.

Configure GPU resources

Under Resources, configure your GPU requirements:

| Setting | Recommended Value |
|---|---|
| GPU | Number of GPUs needed (typically 1) |
| CPU | Match your workload needs (GPU instances have fixed CPU/RAM ratios) |
| RAM | Match your workload needs |

Request only the GPUs you need. Each GPU request reserves an entire GPU.
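Because each request reserves whole GPUs, you can estimate how many nodes a service needs before you set the node group's maximum. The Python sketch below is a back-of-the-envelope calculation, not Porter's scheduling logic: `nodes_needed` and `fits_node_group` are hypothetical names, and the calculation assumes GPUs are the only limiting resource (it ignores CPU and RAM).

```python
import math


def nodes_needed(replicas: int, gpus_per_replica: int, gpus_per_instance: int) -> int:
    """Estimate the nodes required when every replica reserves whole GPUs."""
    if gpus_per_replica < 1:
        raise ValueError("each replica must request at least one GPU")
    if gpus_per_replica > gpus_per_instance:
        # A single replica cannot span nodes, so it would never be scheduled.
        raise ValueError("GPU request exceeds the GPUs on one instance")
    replicas_per_node = gpus_per_instance // gpus_per_replica
    return math.ceil(replicas / replicas_per_node)


def fits_node_group(
    replicas: int, gpus_per_replica: int, gpus_per_instance: int, max_nodes: int
) -> bool:
    """Check the estimate against the node group's maximum nodes setting."""
    return nodes_needed(replicas, gpus_per_replica, gpus_per_instance) <= max_nodes


if __name__ == "__main__":
    # Five replicas requesting 2 GPUs each on an 8-GPU instance: 4 replicas per node.
    print(nodes_needed(replicas=5, gpus_per_replica=2, gpus_per_instance=8))
    # Three single-GPU replicas on a 1-GPU instance with a maximum of 2 nodes.
    print(fits_node_group(replicas=3, gpus_per_replica=1, gpus_per_instance=1, max_nodes=2))
```

If `fits_node_group` returns `False`, either raise the maximum nodes setting or reduce the replica count. Otherwise some replicas are likely to stay unscheduled.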

Troubleshooting

Pod stuck in Pending state


This usually means there are no available GPU nodes. Check:

- The node group has scaled up (check Infrastructure → Cluster)

- Your GPU request doesn’t exceed available GPUs on the instance type

- The node group maximum nodes hasn’t been reached

Out of memory errors


GPU memory errors indicate your model or batch size is too large:

- Reduce batch size

- Use a larger GPU instance with more VRAM
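To judge whether a model is simply too large for a GPU, a rough lower bound on VRAM is the size of its weights: parameter count times bytes per parameter. The Python sketch below illustrates that arithmetic only. `weights_gb` and the `BYTES_PER_PARAM` table are hypothetical names for this example, and the estimate excludes activations, optimizer state, and framework overhead, so real usage will be higher.

```python
# Bytes used per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weights_gb(num_params: int, dtype: str = "fp16") -> float:
    """Lower-bound VRAM estimate in GB (10^9 bytes) for model weights alone."""
    if dtype not in BYTES_PER_PARAM:
        raise ValueError(f"unknown dtype: {dtype}")
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def weights_fit(num_params: int, dtype: str, gpu_memory_gb: float) -> bool:
    """True if the weights alone fit; a False result means the GPU is too small."""
    return weights_gb(num_params, dtype) <= gpu_memory_gb


if __name__ == "__main__":
    params = 7_000_000_000  # an illustrative 7-billion-parameter model
    print(weights_gb(params, "fp16"))      # 14.0
    print(weights_fit(params, "fp32", 16)) # False: 28 GB of weights on a 16 GB GPU
```

A result close to the GPU's memory, such as 14 GB of weights on a 16 GB GPU, leaves little room for activations, so a smaller batch size or a larger instance is still likely to be needed.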

Slow cold starts


GPU nodes take longer to start than CPU nodes due to driver initialization:

- Keep minimum nodes at 1 for latency-sensitive workloads

- Consider keeping a warm pool of nodes during peak hours